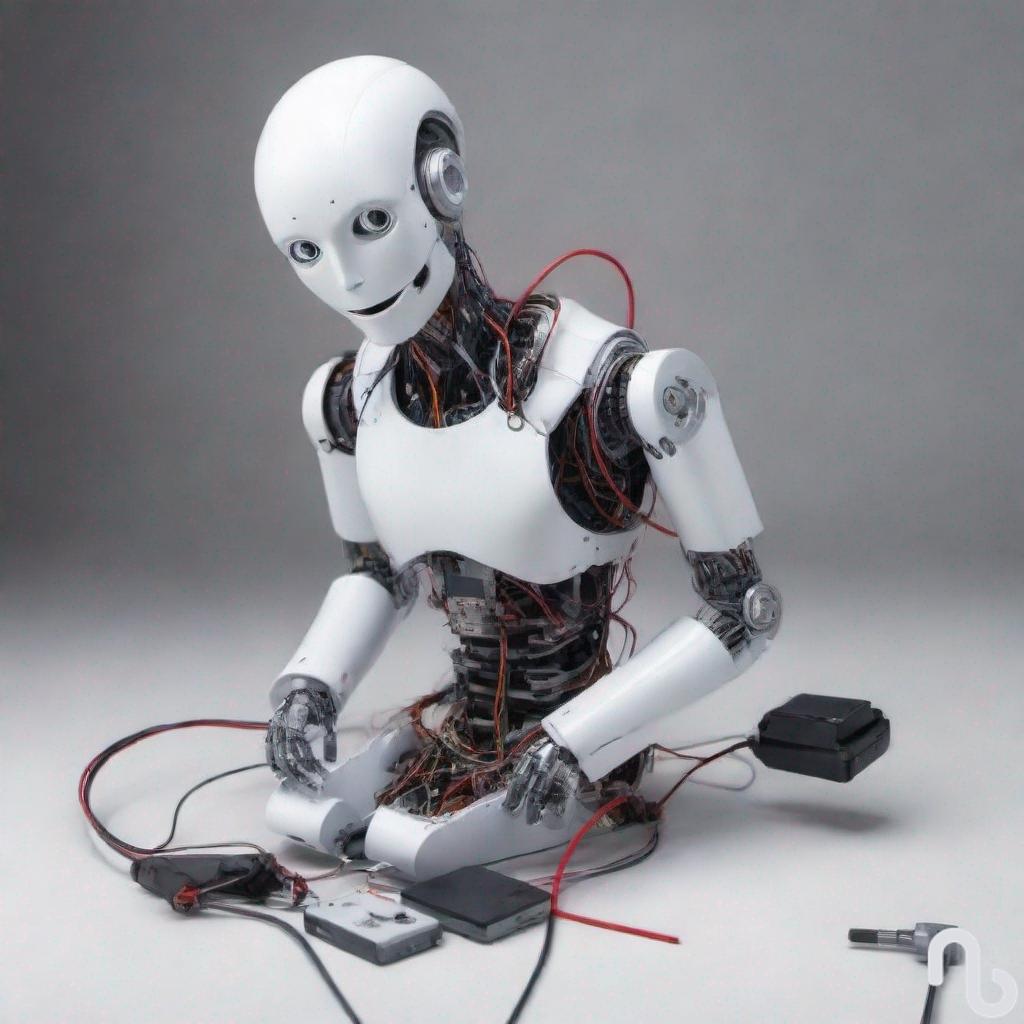

Another missing puzzle piece has come to my attention. It has been difficult to articulate why robots like iCub don’t understand the world around them, since iCub’s body seems to provide the sensory data needed to ground the meanings of words like ‘cup’ and ‘shovel’. The cameras which operate as its eyes take in visual information to be associated with language, and yet Dr. Haikonen repeatedly stresses that such robots do not understand what the words mean. Computers, including the one controlling iCub’s body, only work with syntax, or the rules which govern how sentences are formed. As such, incoming sensory data doesn’t really ground the words iCub learns, because that data consists of yet more symbols; it’s symbols all the way down. The 1’s and 0’s which constitute its sensory data do not contain any semantic information about words, since they aren’t experiences but simply representations of experiences.

Aren’t bodily sensations just neural representations of external stimuli, and thus themselves made up of binary values? Perhaps, but animal physiology is physically and functionally arranged in a manner which generates meaning: what does the bee sting mean to me, as a living body capable of being damaged and ultimately dying? For a robot like iCub, damage means nothing; it doesn’t care whether its arm is removed or it gets hit in the head. Its architecture doesn’t allow for meaning to be generated; it’s just a computer that looks like a human. Could iCub’s architecture be modified to include pain signals that generate meaning? Arguably no, for reasons I will now attempt to articulate, though I’m still working on a satisfactory explanation.

The passage which caught my attention is from Dr. Haikonen’s book The Cognitive Approach to Conscious Machines. I will provide the block quote because summarizing it would remove its flavour, an essence which helps illustrate the issue I’ve been wrestling with.

> I make here a bold generalization and claim that syntax cannot be completely separated from semantics. Every now and then vertical grounding to inner imagery and percepts of the external world is needed in order to resolve the meaning of a sentence. Therefore proper perception of external world entities and their relationships and the formation of respective inner representations are absolutely necessary. A syntactic sentence is a structured description of a given situation. If the system is not able to produce and properly bind all the required percepts then it will not be able to produce a proper verbal description either and vice versa, the system will not be able to decode the respective sentence. Artificially imposed rules alone will not solve every problem here.1

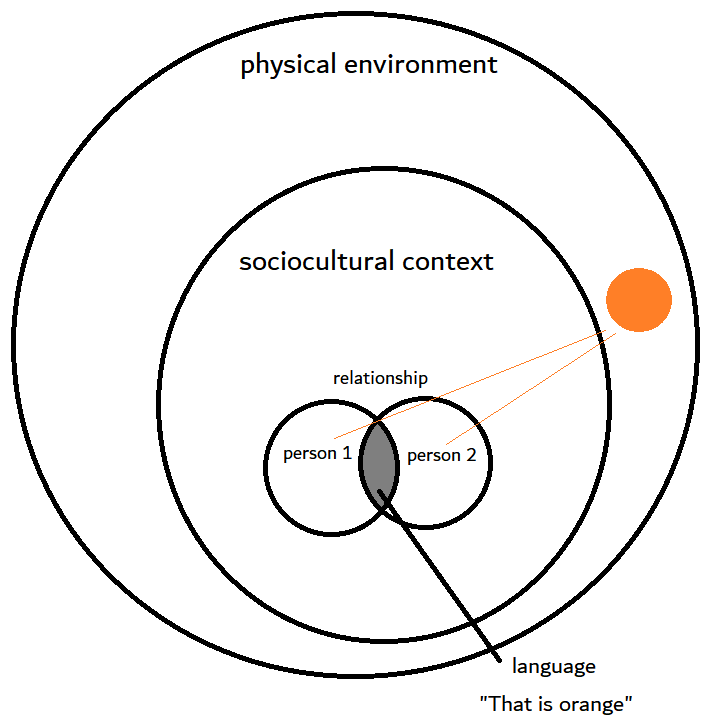

That last sentence holds for reasons Rosen discusses: formal systems, in this case syntax, are abstractions of the natural systems referred to in semantics, and rules alone do not completely represent the natural systems they aim to model. As Haikonen states, syntax provides a structured description of a situation, where these situations are necessarily part of natural systems. By “vertical grounding,” Haikonen means the associations between percepts or sensory information and the words used to describe them.

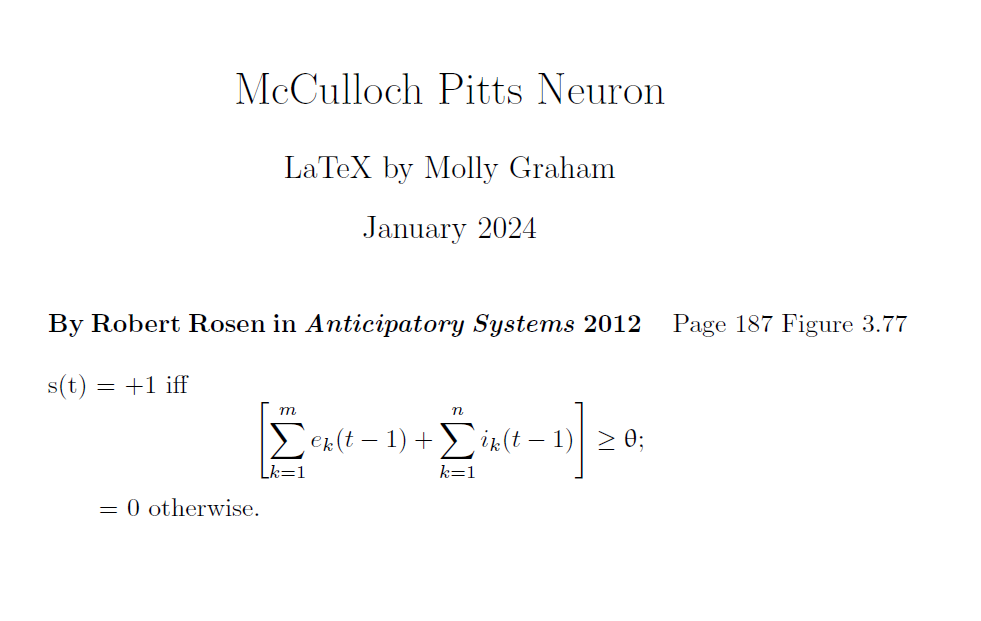

Immediately I can see that indeed, semantics cannot be completely represented by syntax or formal rules, given my familiarity with Rosen’s explanation in Anticipatory Systems. I can also see, however, a horde of philosophy of language professors taking issue with this claim. Which ones, I do not know; I only know one such professor, and he may not be sure whether the generalization is true, or in which cases it might be false. I’ll have to ask him and see what he says. Either way, it is a bold claim and a difficult one to explain. Rosen appeals to Category Theory from pure mathematics to do so, but for those who are uninterested in or put off by mathematical theory, it might not provide a very compelling explanation.

Computerized robots like iCub are rule-based structures all the way down, and even if they were to be provided with a means to care about their own bodies and survival, their interest would remain a simulation. The numerical representations which generate their “experiences” are fundamentally distinct from the analogue representations generated by the human body. By analogue, I mean the various physiological systems which give rise to phenomenal experience. After all, feelings of hunger may be represented by neuronal activity, but they are ultimately generated from the hormone ghrelin. Since hormones are chemical messengers carried throughout the body via the bloodstream, their functionality is distinct from that of neural networks.2

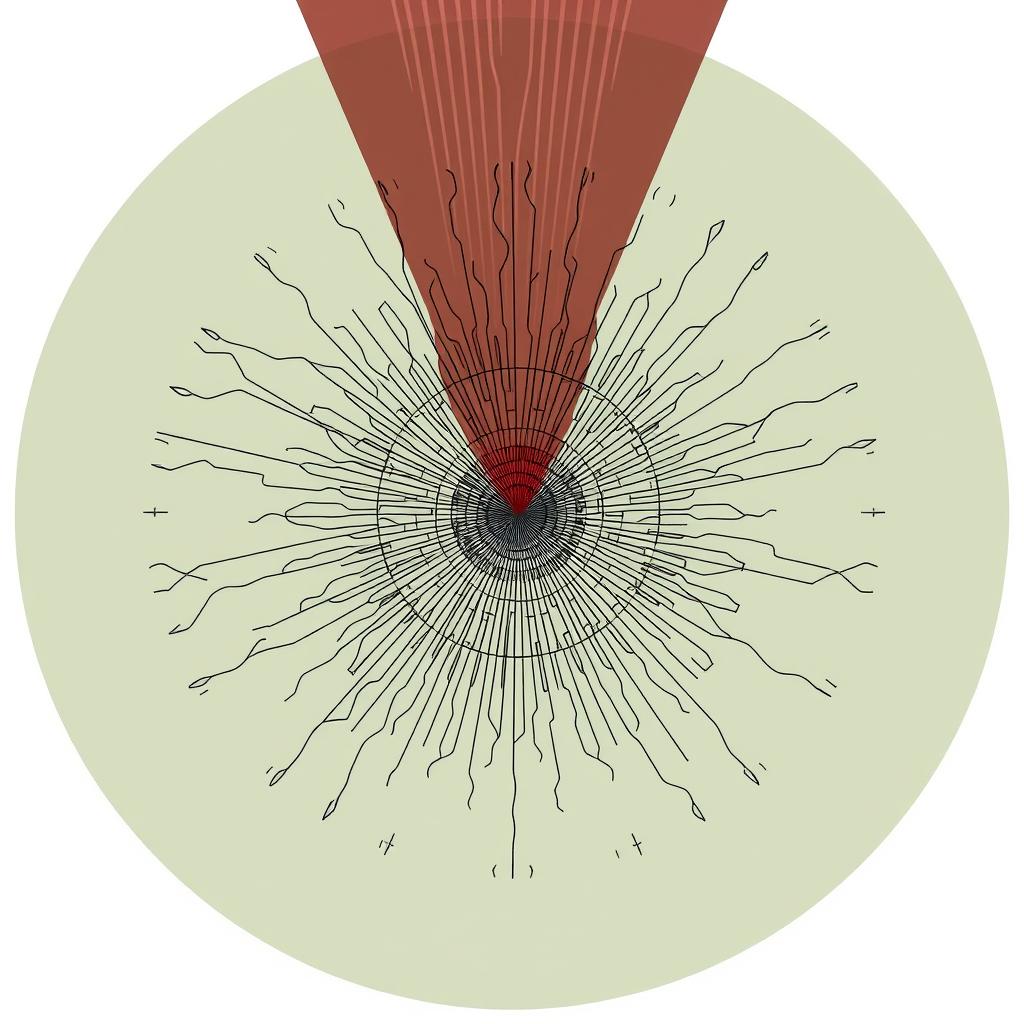

The reason is that analogue signals are continuous streams of information,3 where continuous refers to the numerical values between whole integers, such as infinitesimal fractions or decimal values. In contrast, modern computers use symbolic or representational methods to generate behaviours, where digital channels pass streams of information which are discrete, without intermediate values between whole integers.4 Consequently, digital machines count quantities while analogue machines measure quantities.5 The distinction is significant: though the all-or-nothing firing patterns of neurons can be represented by binary values, the body and its phenomenal experiences cannot be fully reduced to a collection of neural signals.
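To make the counting-versus-measuring distinction a little more concrete, here is a minimal sketch in Python. It is only an illustration under my own assumptions: the function name `quantize`, the choice of eight levels, and the sample readings are all hypothetical, and Python floats are themselves digital, so the "continuous" readings merely stand in for an analogue quantity. The sketch shows that three distinct readings collapse into the same discrete code once they pass through a digital channel.

```python
def quantize(value: float, levels: int) -> int:
    """Map a reading in [0.0, 1.0] onto one of `levels` whole-number codes.

    Hypothetical helper for illustration only: it clamps the reading to the
    valid range and rounds to the nearest available step.
    """
    clamped = min(max(value, 0.0), 1.0)
    return round(clamped * (levels - 1))


# Three slightly different stand-ins for a continuous (analogue) quantity.
readings = [0.40, 0.41, 0.42]

# A coarse digital channel with only 8 discrete steps (assumed for clarity).
codes = [quantize(r, levels=8) for r in readings]

# The readings differ, yet the digital codes do not: the values between
# steps have no representation in the discrete channel.
distinct_readings = len(set(readings))
distinct_codes = len(set(codes))
```

Adding more levels narrows the gaps between steps but never removes them, which is the sense in which a digital machine counts where an analogue one measures.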

So what is this missing piece I stumbled upon? “If the system is not able to produce and properly bind all the required percepts then it will not be able to produce a proper verbal description either and vice versa…” The words and sentences we say have meaning because the rules of language are coupled with the meanings of the words we use. iCub may state “the truck is red” but it doesn’t understand because its version of ‘red’ is without semantic content. The word ‘red’ is not fully grounded because the sensations it appeals to are still symbols made up of discrete values. Instead, analogue signals are required to fully capture what-it-is-like to experience some stimulus, where these signals influence the system’s self-organizing behaviour for the sake of continued survival.

Works Cited

1 Pentti O. Haikonen, The Cognitive Approach to Conscious Machines (UK: Imprint Academic, 2003), 238–39.

2 Mark Andrew Krause et al., An Introduction to Psychological Science: Modeling Scientific Literacy (Pearson Education Canada, 2014), 102.

3 John Johnston, The Allure of Machinic Life: Cybernetics, Artificial Life, and the New AI (The MIT Press, 2008), 28, https://doi.org/10.7551/mitpress/9780262101264.001.0001.

4 Johnston, 28.

5 Norbert Wiener, The Human Use of Human Beings: Cybernetics and Society, 2d ed. rev. (Garden City, NY: Doubleday, 1954), 64.