I am reading Autopoiesis and Cognition by Humberto Maturana and Francisco Varela for my thesis, and a significant connection has leapt out at me from page 10. This section is written by Maturana, and his fourth point about living systems states:

“Due to the circular nature of its organization a living system has a self-referring domain of interactions (it is a self-referring system), and its condition of being a unit of interactions is maintained because its organization has functional significance only in relation to the maintenance of its circularity and defines its domain of interactions accordingly.”

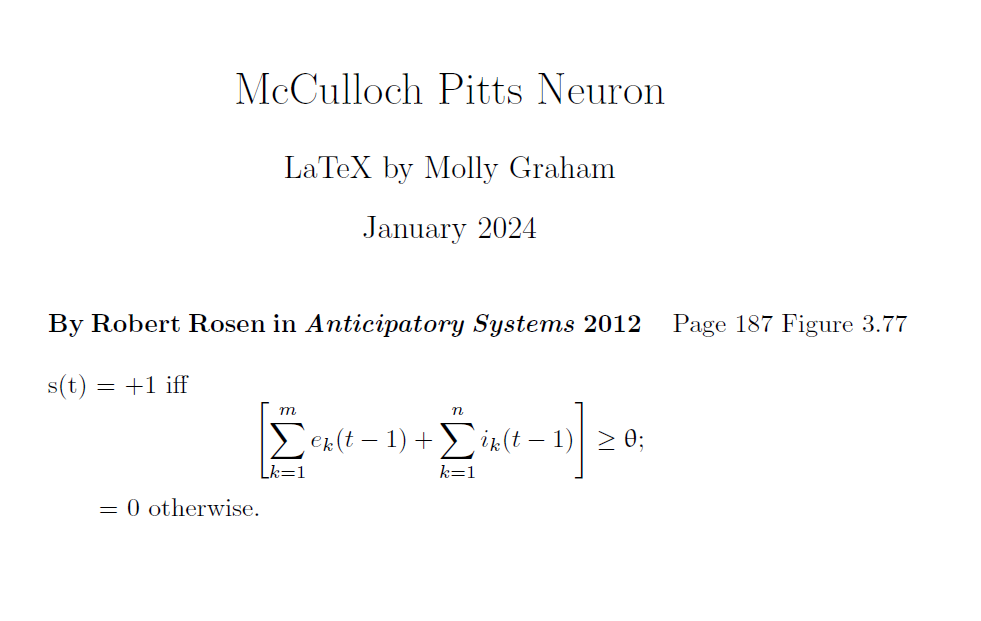

This passage expands upon the nugget of wisdom supplied by Kurt Gödel as appealed to by Robert Rosen. Recall that Gödel used self-reference to show that any consistent formal system expressive enough to describe arithmetic is incomplete: it contains statements which can be neither proved nor disproved from within the system itself. Although Rosen appeals to syntax and semantics in Anticipatory Systems, the broader point is about the differences between natural systems and formal systems. My ultimate goal is to articulate this relationship and its implications in more general terms, with a particular focus on comparing AI and machines to humans and animals. So far, I’ve been able to sketch some themes and ideas in relation to Rosen and this relationship, but much more work is required to put into words the ideas which currently exist only as intuitions. For now, however, I will document the process of how this all comes together because the externalization of ideas will foster their articulation.

Though Rosen appeals to language, language is merely an attempt at portraying elements of the world as understood by its author or speaker. Maturana’s passage is the missing link in a wider explanation of the phenomenon in question. Where does this incompleteness come from? Why is it that AI cannot ontologically compete with human intellect? The answer has to do with scope and the way wholes can be greater than the sum of their parts.

In biology, organisms are made up of various self-organizing processes which aim to support the continued survival of the individual. Although composed of nested levels of physiological processes, a person is greater than the sum total of his physicality. In some ways, the idea of self is highly complex and philosophically dense, but seen through the lens of biology, the self refers to an individual as contained by its own body. Every living thing has a boundary inside which its processes take place, delineating it from the rest of its environment as a unit. Arguably, the nervous system evolved to provide individuals with information about their internal and external environments for the sake of continued survival. By responding to changes in the environment, the individual can take actions which mitigate these changes.

Physiological processes can be described as a sequence of steps or actions taken within some system. In Rosen’s terms, a formal system can be generated from a natural system; however, doing so produces an abstraction which ignores all but the elements necessary for producing some outcome or end state. For example, when it comes to predicting tomorrow’s temperature, some atmospheric factors will be taken into consideration, such as wind patterns and moisture levels. Other aspects of the Earth, perhaps something related to plate tectonics or spruce tree populations, can be ignored as they don’t meaningfully influence how temperatures manifest. When scientists generate weather and climate models, they only include variables which impact the systems they are interested in studying. The model, as described by mathematics, can be seen as a set of relations and calculations which provides an output, and in this way, exists as a sequence of steps to be taken. If one were to write out these steps, one would have something which resembles an algorithm or piece of computer code.
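To make this concrete, here is a minimal sketch in Python of what such a written-out model might look like. Everything in it is my own illustration rather than anything taken from Rosen or from real meteorology: the `WeatherState` class, the `predict_tomorrow_temperature` function, and the coefficients are all hypothetical and chosen only to show the shape of the idea.

```python
from dataclasses import dataclass


@dataclass
class WeatherState:
    """The only variables this toy model 'knows' about (an assumption)."""
    temperature_c: float
    wind_speed_kmh: float
    humidity_pct: float


def predict_tomorrow_temperature(state: WeatherState) -> float:
    """A fixed sequence of steps from inputs to an output.

    The coefficients below are invented for illustration and have no
    meteorological basis.
    """
    wind_cooling = 0.05 * state.wind_speed_kmh    # step 1
    humidity_warming = 0.02 * state.humidity_pct  # step 2
    return state.temperature_c - wind_cooling + humidity_warming  # step 3


today = WeatherState(temperature_c=20.0, wind_speed_kmh=10.0, humidity_pct=50.0)
prediction = predict_tomorrow_temperature(today)

# Anything left out of the abstraction simply does not exist for the model.
knows_about_spruce = hasattr(today, "spruce_tree_population")

print(prediction)
print(knows_about_spruce)
```

The point of the sketch is not the arithmetic but the boundary: the function can only ever consult the three fields it was given, and a question about spruce trees cannot even be posed to it without rewriting the model.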

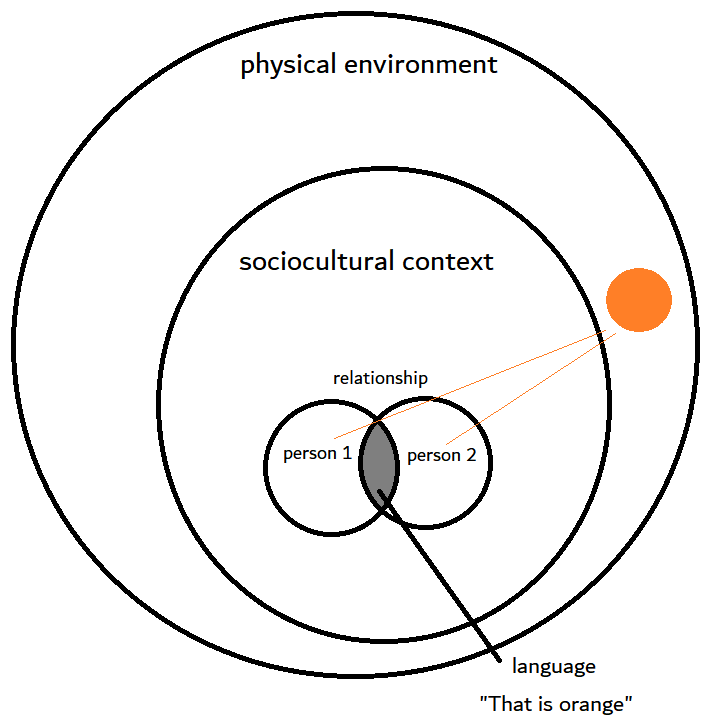

If additional information is required which has not been accounted for in the model, it is inaccessible because it remains beyond the scope of the existing model. In some cases, the model can be expanded to include the missing variable, say by adding spruce tree populations; however, Rosen’s point is that no amount of augmentation will provide a model which completely represents the natural system in question. The natural system will always contain aspects which cannot be properly accounted for by formal systems, and the example he uses is semantics. This becomes apparent with indexicals, as ‘me’ or ‘today’ are rather difficult to articulate without appealing to the wider context or situation in which they are used. To understand when or who is being referred to, the interpreter must appeal to their own knowledge and understanding to fill in the blank, moving beyond the words themselves.

These ideas of circularity and sequential steps had me thinking of the rod and the ring again. I made a connection to this apparent duality in another post; lo and behold, here it is again. In fact, I’ve made reference to a number of blog entries within this very post, and as such, we see self-organization and coalescence here too. All of these writings, however, are made up of a series of passages, sentences which attempt to present ideas in a sequential form. As a relatively formless mass, for now at least, the ideas presented here and in other posts currently exist as a nebulous collection of related topics. One day, I hope to turn it into a more linear and organized argument which doesn’t frustrate the reader as much as it surely does now. “Where are you going with this…??” Something to do with nested systems, parts and wholes, and how self-organizing systems can be described as a series of linear steps without being reducible to them.

How to expand outward beyond the current scope? Self-reflection. In fact, our capacity for self-reflection was probably made possible by our social nature. Others act as a mirror in which we can see ourselves through the eyes of someone else. The mirror-image is metaphorically reversed though, as we see ourselves from a new perspective, one coming from the outside-in rather than the inside-out. I’ve been thinking about Kant’s transcendental self lately, but this is a topic for another day.

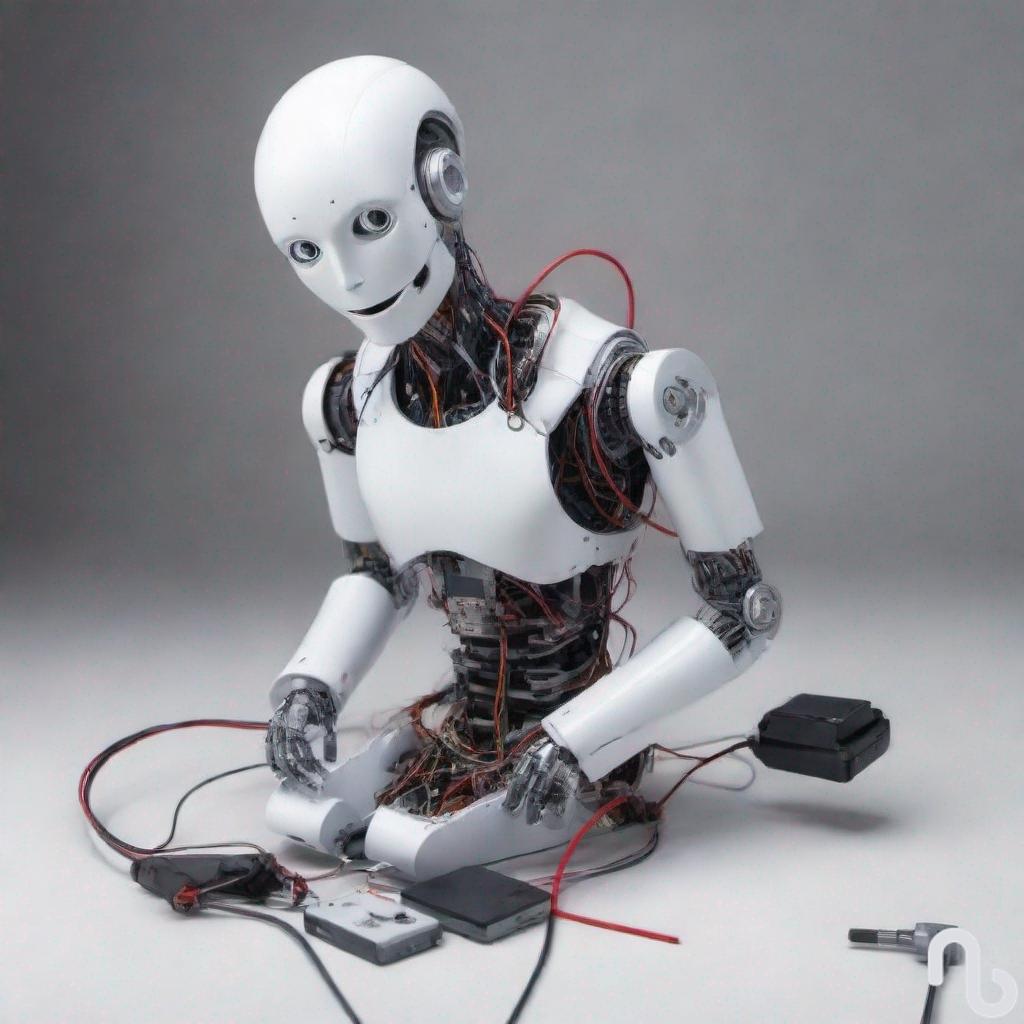

All of this is for an argument about why we shouldn’t give robots and AIs rights and legal considerations. They are simply not the kinds of things which are deserving of rights because they are functionally distinct from humans, animals, and other living beings. Their essential nature is linear and sequential, not autopoietic. This difference makes them not just other but ontologically lesser, a reduction arising from formal systems and human creation. As such, they pale in comparison to the complex systems observed in nature.